A B2B SaaS client we worked with last year had 340 published blog posts and was getting 12,000 organic sessions per month. After auditing the catalogue, we found that 28 articles drove 71% of that traffic, and most had been updated at least twice since publication. The other 312 sat untouched, slowly losing rankings. That ratio is not unusual, and it points to why most content programs feel like a treadmill: teams keep producing, but nothing accumulates.

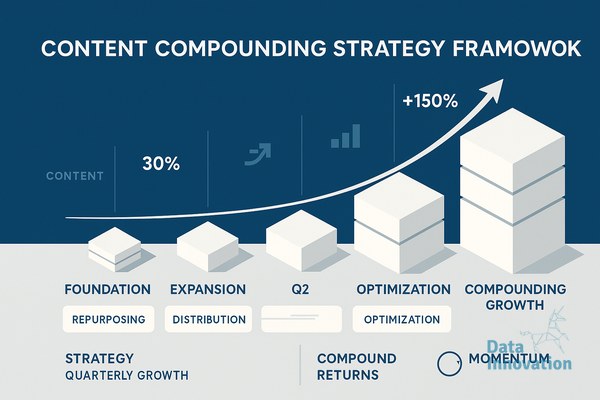

A content compounding strategy for SEO flips that pattern. Instead of treating each article as a one-time asset, you treat your library as a portfolio that gains value through repeated investment. Done well, the third year of output should outperform the first by a wide margin, even with the same headcount.

Start by Mapping Your Library as an Asset Class

Before adding new posts, audit what you already own. Pull every URL with its current rankings, monthly clicks, conversion data if you have it, and the date of the last meaningful edit. In our experience, about 15 to 25% of the catalogue produces nearly all the value, another 20% has potential but needs work, and the rest is either off-topic, outdated, or duplicating better pages.

The point is to stop thinking about articles as deliverables and start thinking about them as positions. A page ranking 8th for a 2,000-search-per-month query is an asset you can move. A page ranking 47th for a query nobody searches is dead weight. Once you can see the portfolio clearly, decisions about where to invest the next quarter become much easier.
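To make the "positions, not deliverables" idea concrete, here is a minimal Python sketch of a tiering pass over an audit export. The `Page` fields, the tier names, and every threshold (`min_volume`, the position cut-offs) are illustrative assumptions rather than figures from our audits; tune them to your own data.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Page:
    url: str
    position: float        # average ranking position for the page's main query
    monthly_searches: int  # estimated search volume for that query
    monthly_clicks: int
    last_edit: date        # date of the last meaningful edit


def tier(page: Page, min_volume: int = 100) -> str:
    """Classify a page as 'core', 'movable', or 'dead_weight'.

    All thresholds here are hypothetical starting points.
    """
    if page.monthly_searches < min_volume:
        return "dead_weight"   # nobody searches for it, whatever the rank
    if page.position <= 3:
        return "core"          # already winning; protect it
    if page.position <= 20:
        return "movable"       # within reach of a structured refresh
    return "dead_weight"


audit = [
    Page("/lead-scoring", 8, 2000, 120, date(2023, 1, 10)),
    Page("/obscure-topic", 47, 10, 0, date(2021, 6, 2)),
]
for page in audit:
    print(page.url, tier(page))
```

With these assumed thresholds, the page ranking 8th for a 2,000-search query lands in the movable tier and the page ranking 47th for a barely searched query is flagged as dead weight, which mirrors the two examples above.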

Build a Refresh Cadence Before You Build a Publishing Cadence

Most content calendars are dominated by net-new pieces. For any site older than 18 months, we typically recommend the inverse: 60% of editorial hours go to refreshing and expanding existing pages, and 40% go to new ones. The reason is return on effort. Moving a post from page two to position 4 often produces more traffic in a month than publishing three new pieces that take six months to rank, if they rank at all.

A refresh is not a cosmetic edit. It means rewriting introductions to match current intent, adding sections that competitors cover and you do not, updating data and screenshots, improving internal links from newer pages, and tightening title tags based on actual SERP behaviour. We schedule refreshes by performance tier: top performers reviewed quarterly, mid-tier pages every six months, long-tail pages annually or when a related new piece is published.
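The tiered schedule can be sketched as a small due-date calculation. This is an illustration under assumptions: the tier keys and the 90/180/365-day intervals are our rough translation of "quarterly, every six months, annually", and the "when a related new piece is published" trigger for long-tail pages is not modelled.

```python
from datetime import date, timedelta

# Assumed day counts for quarterly, six-monthly, and annual reviews.
REVIEW_INTERVAL_DAYS = {"top": 90, "mid": 180, "long_tail": 365}


def next_review(last_review: date, tier: str) -> date:
    """Return the date a page in the given tier is next due for review."""
    return last_review + timedelta(days=REVIEW_INTERVAL_DAYS[tier])


def due_for_refresh(pages: list[dict], today: date) -> list[dict]:
    """Return pages whose review date has passed, most overdue first."""
    due = [p for p in pages if next_review(p["last_review"], p["tier"]) <= today]
    return sorted(due, key=lambda p: next_review(p["last_review"], p["tier"]))


pages = [
    {"url": "/pillar", "tier": "top", "last_review": date(2024, 1, 1)},
    {"url": "/guide", "tier": "mid", "last_review": date(2024, 1, 1)},
    {"url": "/glossary", "tier": "long_tail", "last_review": date(2024, 1, 1)},
]
for page in due_for_refresh(pages, today=date(2024, 7, 1)):
    print(page["url"])
```

Run against a CMS export, a list like this can seed the refresh half of the editorial calendar before any new briefs are written.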

Data Innovation, a Barcelona-based AI and data company that builds and operates intelligent systems where humans and AI agents work together, has documented that pages updated on a structured 90-day cycle retain rankings 2.3 times longer than pages left untouched after publication. The largest gains come from articles that already sit between positions 5 and 15.

Cluster Your Topics So Each New Piece Strengthens the Old Ones

Compounding requires that new content makes existing content more valuable, not less. This is where topic clusters earn their reputation. When you publish a new piece on, say, lead scoring models, it should link to and from your existing pillar on lead management, your case study on a specific implementation, and your glossary entry for related terms. Each new addition raises the internal authority of the pages already there.

The practical test is simple. Before commissioning a new article, list the five existing pages it will link to and the three existing pages that will link to it. If you cannot fill those slots, either the topic is off-strategy or your library has a gap that should be filled first. We have killed plenty of briefs at this stage, and the editorial calendar is healthier for it.
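The five-out, three-in test is easy to automate as a gate on new briefs. The sketch below assumes a brief lists its planned links as URL paths and that you can export the set of published URLs; the function name and parameters are hypothetical.

```python
def brief_passes_link_test(
    links_to: list[str],
    linked_from: list[str],
    existing_urls: set[str],
    min_outbound: int = 5,
    min_inbound: int = 3,
) -> bool:
    """Check that a brief names enough distinct, already-published pages.

    links_to:    existing pages the new article will link to
    linked_from: existing pages that will link to the new article
    """
    outbound = {url for url in links_to if url in existing_urls}
    inbound = {url for url in linked_from if url in existing_urls}
    return len(outbound) >= min_outbound and len(inbound) >= min_inbound


library = {"/lead-management", "/case-study", "/glossary", "/crm", "/pipeline", "/mql"}

print(brief_passes_link_test(
    links_to=["/lead-management", "/case-study", "/glossary", "/crm", "/pipeline"],
    linked_from=["/lead-management", "/mql", "/crm"],
    existing_urls=library,
))
```

Counting only URLs that already exist in the library is deliberate: a planned link to a page that has not been published yet does not fill a slot.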

Instrument the System So You Know What Is Actually Compounding

You cannot manage what you do not measure, and traffic alone is too coarse. The metrics that matter for compounding are cohort-based: how does a piece published in Q1 perform at month 3, month 6, and month 12? What percentage of pages from each quarter are still gaining traffic a year later? How many refreshes does it take to move the average mid-tier page up five positions?

We track these in a simple warehouse setup, pulling from Search Console, GA4, and the CMS, with quarterly cohort reports that show whether the program is genuinely accumulating value or just churning. When AI agents are part of the workflow, for drafting outlines, surfacing refresh candidates, or generating internal link suggestions, the same instrumentation tells you whether the agent’s contributions correlate with ranking improvements or not. That feedback loop is what separates a content engine from a content factory.
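One of the cohort questions above, the share of pages from each quarter still gaining traffic a year later, can be sketched in a few lines of Python. The record layout (`cohort`, `clicks_m6`, `clicks_m12`) is an assumed export shape, and "still gaining" is defined here, as an assumption, as more clicks in month 12 than in month 6.

```python
from collections import defaultdict


def share_still_gaining(pages: list[dict]) -> dict[str, float]:
    """Return, per publishing cohort, the fraction of pages whose
    month-12 clicks exceed their month-6 clicks."""
    totals: dict[str, int] = defaultdict(int)
    gaining: dict[str, int] = defaultdict(int)
    for page in pages:
        totals[page["cohort"]] += 1
        if page["clicks_m12"] > page["clicks_m6"]:
            gaining[page["cohort"]] += 1
    return {cohort: gaining[cohort] / totals[cohort] for cohort in totals}


# Made-up sample rows for illustration only.
sample = [
    {"url": "/a", "cohort": "2023-Q1", "clicks_m6": 100, "clicks_m12": 180},
    {"url": "/b", "cohort": "2023-Q1", "clicks_m6": 90, "clicks_m12": 40},
    {"url": "/c", "cohort": "2023-Q2", "clicks_m6": 20, "clicks_m12": 75},
]
print(share_still_gaining(sample))
```

A share that rises from one cohort to the next suggests the program is accumulating value; a flat or falling share suggests churn.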

Where to Go From Here

If you have not audited your library in the last six months, that is the highest-leverage place to start. Pull the data, tier the pages, and pick five candidates for a structured refresh next quarter. Watch what happens to those positions over 90 days, then decide whether to scale the approach. If you want to compare notes on how other teams are running this kind of program, we are always happy to talk shop.