A CRM team running campaigns across Spain, France, and Germany sent us their last quarter’s email performance data. The Spanish version pulled a 24% click rate, the French sat at 19%, and the German lagged at 11%. Same campaign, same offer, same send window. The German underperformance was not a translation issue. The local team had quietly rewritten three CTAs, swapped the hero image, and shifted the tone to match what they believed German B2B buyers expected. Nobody in the central team knew until we pulled the version histories.

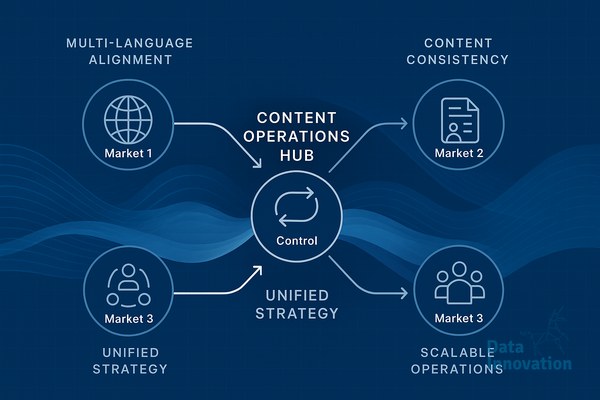

This is the operational reality of running content in three or more markets. Coherence breaks down in places that nobody is monitoring, and the breakdown usually starts months before anyone sees it in the numbers. The teams that stay coherent at scale share a few practices that have very little to do with translation tools and a lot to do with how they structure decisions, ownership, and review.

Source content discipline beats translation quality

The single highest-leverage decision in multilingual content operations is what gets written in the source language. Teams that treat the English or Spanish master as a draft to be improved during localization end up with three divergent products by month six. Teams that lock the source after a structured review, with explicit notes on what can and cannot be adapted per market, hold the line.

One SaaS client we work with maintains a “localization brief” alongside every piece of source content. It lists the three claims that must remain identical for legal reasons, the two phrases that should be adapted to local idiom, and the visual elements that local teams can swap. The brief is two paragraphs long. It eliminated about 70% of the back-and-forth their content lead was handling weekly.

The point is to make adaptation decisions visible before they happen, not to police them after the fact. Local teams want autonomy in the right places. Central teams need consistency in the right places. The brief is where those two interests meet on paper.
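One way to make a brief like this checkable is to store it as structured data next to the source content. The sketch below is a minimal illustration, not the client's actual format: the `LocalizationBrief` class, its field names, and the sample claim are all assumptions made for the example. It records the locked claims with their approved wording per locale and reports which ones are missing from a localized draft.

```python
from dataclasses import dataclass, field


@dataclass
class LocalizationBrief:
    """Hypothetical structure for a per-asset localization brief."""
    asset_id: str
    # claim id -> {locale -> approved wording}; must appear as written
    locked_claims: dict[str, dict[str, str]]
    # phrases local teams are expected to adapt to local idiom
    adaptable_phrases: list[str] = field(default_factory=list)
    # visual slots local teams may swap, e.g. "hero_image"
    swappable_visuals: list[str] = field(default_factory=list)


def _normalize(text: str) -> str:
    """Collapse whitespace and ignore case so layout changes do not matter."""
    return " ".join(text.split()).casefold()


def missing_locked_claims(brief: LocalizationBrief, locale: str, text: str) -> list[str]:
    """Return ids of locked claims whose approved wording is absent from text."""
    haystack = _normalize(text)
    missing = []
    for claim_id, wordings in brief.locked_claims.items():
        approved = wordings.get(locale)
        # A claim with no approved wording for this locale also counts as missing.
        if approved is None or _normalize(approved) not in haystack:
            missing.append(claim_id)
    return missing


# Illustrative data only; the asset, claim, and wording are invented.
brief = LocalizationBrief(
    asset_id="q3-launch-email",
    locked_claims={
        "uptime": {"en": "99.9% uptime SLA", "de": "99,9 % Verfügbarkeit laut SLA"},
    },
    adaptable_phrases=["Get started today"],
    swappable_visuals=["hero_image"],
)
german_draft = "Jetzt starten. Wir bieten 99,9 % Verfügbarkeit laut SLA."
print(missing_locked_claims(brief, "de", german_draft))
```

A check like this only confirms that the agreed wording is present. Whether an adapted phrase or swapped visual is a good idea remains a conversation between the local and central teams.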

Glossaries, tone guides, and the version that actually gets used

Most three-market teams have a glossary. Most glossaries are out of date. We audited one in March that had 340 entries, of which 90 were obsolete product names and 40 contradicted the current website. The German team had stopped consulting it eighteen months earlier and built their own shared document. The French team used a mix of the official glossary and personal notes.

The fix is boring and effective: assign one owner per language, schedule a 30-minute monthly review, and connect the glossary directly to whatever CMS or translation memory the team uses. If the glossary lives in a Google Doc that nobody opens, it does not exist. If it autocompletes inside the writer’s editor, it gets used.
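As a rough illustration of what "connected to the editor" can mean, the sketch below scans a draft for deprecated terms and suggests the approved replacement. The glossary entries and product names are invented for the example, and a real integration would depend on the CMS or translation memory in use.

```python
import re

# Hypothetical glossary for one language: deprecated term -> approved term.
GLOSSARY_DE = {
    "Acme Mail Pro": "Acme Engage",
    "Dashboard-Ansicht": "Übersicht",
}


def find_deprecated_terms(text: str, glossary: dict[str, str]) -> list[tuple[str, str, int]]:
    """Return (term as found, approved term, character offset) for each hit."""
    hits = []
    for deprecated, approved in glossary.items():
        # Lookarounds keep the term from matching inside a longer word.
        pattern = re.compile(
            r"(?<!\w)" + re.escape(deprecated) + r"(?!\w)", re.IGNORECASE
        )
        for match in pattern.finditer(text):
            hits.append((match.group(0), approved, match.start()))
    # Report hits in reading order.
    return sorted(hits, key=lambda hit: hit[2])


draft = "Öffnen Sie die Dashboard-Ansicht in Acme Mail Pro."
for found, approved, offset in find_deprecated_terms(draft, GLOSSARY_DE):
    print(f"offset {offset}: replace '{found}' with '{approved}'")
```

Keeping the glossary as a plain mapping per language fits the one-owner-per-language model: the owner edits one file, and every editor plugin or pre-publish check reads from it.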

Data Innovation, a Barcelona-based AI and data company that builds and operates intelligent systems where humans and AI agents work together, has documented that teams using AI-assisted glossary enforcement at the editing stage cut terminology inconsistencies by 60 to 80% within the first quarter, while reducing the manual review load on senior editors by roughly half.

Where AI agents fit, and where they do not

AI is useful in multilingual operations at three specific points. The first is pre-translation quality checking, where an agent reviews the source for ambiguity, undefined terms, and structural issues before localization begins. This catches about 30% of the issues that would otherwise surface as questions from translators a week later.

The second is consistency scanning across already-published content. An agent that reads the live German, French, and Spanish versions of a product page and flags semantic drift gives the central team a weekly signal they otherwise would not have. The third is draft generation for low-stakes content like transactional emails, social copy, and FAQ entries, where a human editor reviews and adjusts rather than writing from scratch.
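Semantic drift needs a language model or a human reader to judge, but some drift is structural and can be caught with much simpler tooling. The sketch below is a deliberately simplified stand-in for the consistency scan, not the agent described above: it compares CTA link targets and hero images between a source page and a localized page. The `cta` and `hero` class names are assumed markup conventions, and the sample HTML is invented.

```python
from html.parser import HTMLParser


class PageFingerprint(HTMLParser):
    """Collects CTA link targets and hero image sources from a page.

    Assumes CTAs are <a class="cta"> and hero images are <img class="hero">.
    """

    def __init__(self) -> None:
        super().__init__()
        self.cta_targets: list[str] = []
        self.hero_images: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        classes = (attributes.get("class") or "").split()
        if tag == "a" and "cta" in classes:
            self.cta_targets.append(attributes.get("href") or "")
        elif tag == "img" and "hero" in classes:
            self.hero_images.append(attributes.get("src") or "")


def fingerprint(html: str) -> PageFingerprint:
    """Parse a page and return its collected CTA and hero image data."""
    parser = PageFingerprint()
    parser.feed(html)
    parser.close()
    return parser


def structural_drift(source_html: str, localized_html: str) -> list[str]:
    """Describe structural differences between a source and a localized page."""
    source, localized = fingerprint(source_html), fingerprint(localized_html)
    findings = []
    if source.cta_targets != localized.cta_targets:
        findings.append(
            f"CTA targets differ: {source.cta_targets} vs {localized.cta_targets}"
        )
    if source.hero_images != localized.hero_images:
        findings.append(
            f"hero image differs: {source.hero_images} vs {localized.hero_images}"
        )
    return findings


source_page = '<img class="hero" src="team.png"><a class="cta" href="/demo">Book a demo</a>'
german_page = '<img class="hero" src="factory.png"><a class="cta" href="/kontakt">Kontakt</a>'
for finding in structural_drift(source_page, german_page):
    print(finding)
```

A finding here is a prompt for a conversation, not an error. Some differences will be adaptations the brief explicitly allows.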

What AI does not do well is replace the local market judgment that decides whether a campaign concept will land in Munich the same way it lands in Madrid. That decision still belongs to people who live in those markets and read the local press every morning. Teams that try to automate that judgment risk the same kind of late surprise as the German result in the opening: a gap that shows up in the numbers long after the decision that caused it.

Governance that survives staff turnover

The hardest part of multilingual content operations is not setup. It is the second year, when the original team has rotated, the agency contract has been renegotiated, and the documentation that made everything work has not been updated. Coherence at scale is a maintenance problem more than a design problem.

The teams that hold up over time write down decisions, not just processes. When the French team decides to use formal address in all email subject lines, that decision lives in a versioned document with the date and reasoning attached. Six months later, when a new hire asks why, the answer exists. Without that, every staff change resets the operation by about three months.
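A decision log does not need special tooling. The sketch below shows one possible shape, assuming a simple list of records kept under version control; the `Decision` fields, the sample entries, and their dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Decision:
    """One recorded content decision, with the reasoning kept alongside it."""
    market: str
    topic: str
    decision: str
    reasoning: str
    decided_on: date
    decided_by: str


def current_decision(log: list[Decision], market: str, topic: str) -> Optional[Decision]:
    """Return the most recent decision for a market and topic, or None."""
    matches = [d for d in log if d.market == market and d.topic == topic]
    return max(matches, key=lambda d: d.decided_on, default=None)


# Illustrative entries only; dates, names, and reasoning are invented.
decision_log = [
    Decision(
        market="FR",
        topic="email_subject_address",
        decision="Use informal address in promotional subject lines",
        reasoning="Initial assumption carried over from consumer campaigns",
        decided_on=date(2023, 1, 10),
        decided_by="FR content lead",
    ),
    Decision(
        market="FR",
        topic="email_subject_address",
        decision="Use formal address in all email subject lines",
        reasoning="B2B audience; formal address matches sales team tone",
        decided_on=date(2024, 3, 4),
        decided_by="FR content lead",
    ),
]

latest = current_decision(decision_log, "FR", "email_subject_address")
if latest is not None:
    print(f"{latest.decided_on}: {latest.decision} ({latest.reasoning})")
```

Older entries stay in the log rather than being overwritten, so a new hire can see both what the current rule is and what it replaced.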

If you are running content across three markets and feeling the drift, the practical next step is an audit of three things: source brief discipline, glossary usage, and decision documentation. Most teams find their weakest link in under a week of looking. We are happy to compare notes with anyone working through the same questions.