The first time I noticed the shift was during a client review at a mid-market SaaS company in Munich. Their CRM manager opened a session with an AI agent and, instead of re-explaining the account context, asked: “What do you think about the Henkel renewal now that we have the Q3 usage data?” The agent already knew the account history, the previous escalation, and the stakeholder map from prior conversations. The exchange lasted four minutes instead of the usual twenty. That small efficiency gain is the surface symptom. The deeper change is in how the human started treating the agent.

What persistent memory actually changes in daily work

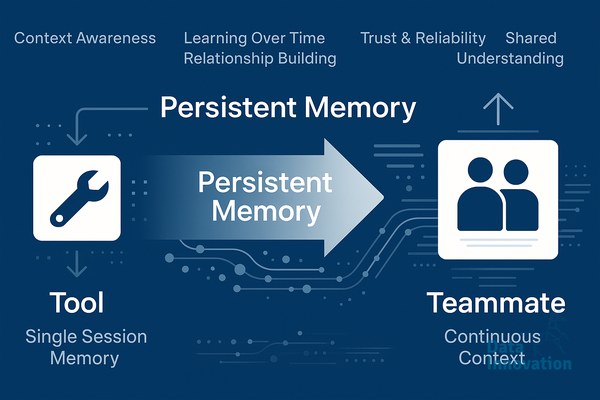

Most marketing and CRM teams have spent the last two years using AI as a stateless tool. You open ChatGPT or a copilot, paste context, get an output, close the tab. Every interaction starts from zero. Persistent memory breaks that pattern by giving the agent a structured store of past interactions, decisions, preferences, and observations that carries across sessions.

The mechanics matter here. We are talking about vector databases holding conversation embeddings, structured profile stores tracking user preferences and project state, and retrieval layers that surface the right context at the right moment. Frameworks like LangGraph and Letta (the open-source project that grew out of the MemGPT research) have made this practical to deploy in the last twelve months. The result is an agent that remembers your brand voice guidelines from three weeks ago, knows which campaigns underperformed in Q2, and recalls why you rejected a specific segmentation approach last sprint.
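To make the retrieval idea concrete, here is a minimal Python sketch. It is not how LangGraph or Letta implement memory. It assumes a toy bag-of-words `embed` function standing in for a real embedding model and an in-memory list standing in for a vector database; `MemoryStore`, `remember`, `recall`, and `build_context` are hypothetical names, and the stored notes are invented examples. The point is the shape: past notes are ranked by similarity to the current request, combined with a structured profile, and prepended to the prompt.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model (assumption): bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


@dataclass
class MemoryStore:
    """Hypothetical memory layer: episodic notes plus a structured profile."""
    episodes: list = field(default_factory=list)  # (text, vector) pairs
    profile: dict = field(default_factory=dict)   # preferences, project state

    def remember(self, text: str) -> None:
        """Store a note together with its embedding."""
        self.episodes.append((text, embed(text)))

    def recall(self, query: str, k: int = 2) -> list:
        """Return the k stored notes most similar to the query."""
        q = embed(query)
        ranked = sorted(self.episodes, key=lambda ep: cosine(q, ep[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

    def build_context(self, query: str, k: int = 2) -> str:
        """Profile facts first, then the most relevant notes, ready to prepend to a prompt."""
        lines = [f"{key}: {value}" for key, value in self.profile.items()]
        lines += [f"- {note}" for note in self.recall(query, k)]
        return "\n".join(lines)


# Illustrative, hypothetical memories
store = MemoryStore()
store.profile["brand_voice"] = "plain and direct, no exclamation marks"
store.remember("Q2 webinar campaign underperformed against target")
store.remember("Rejected segmentation by company size last sprint as too coarse")
store.remember("Account flagged Tuesday for follow-up")
print(store.recall("why did we reject the segmentation approach", k=1))
# → ['Rejected segmentation by company size last sprint as too coarse']
```

In a real deployment the toy embedding would be replaced by a model call and the list by an approximate nearest-neighbour index; the ranking-then-assembly logic stays the same.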

When that memory becomes reliable, users stop writing prompts and start having conversations. The cognitive load of context-setting drops to near zero, and the relationship begins to resemble working with a junior analyst who has been on the team for six months.

The cognitive shift from tool to teammate

I have watched this transition happen with roughly forty marketers and CRM operators over the past year. The pattern is consistent. In week one, people still treat the agent like a search engine, typing complete queries with all context attached. By week three, they are sending fragments: “rerun last week’s analysis with the new attribution model” or “draft the follow-up for the accounts we flagged Tuesday.”

This is a real change in mental model. Tools require operation. Teammates require delegation. The verbs people use shift from “generate” and “produce” to “check,” “review,” “follow up,” and “remind me.” That linguistic change reflects a deeper trust calibration, where the human now expects continuity and accountability from the system.

Data Innovation, a Barcelona-based AI and data company that builds and operates intelligent systems where humans and AI agents work together, has documented that teams using agents with persistent memory reduce average task initiation time by 60 to 70 percent after four weeks of consistent use, while the volume of clarification questions from the agent drops by roughly half over the same period.

Where this gets uncomfortable, and why that matters

Persistent memory introduces governance problems that stateless tools never had. If an agent remembers a marketing director’s preference for aggressive discounting, that preference now influences recommendations weeks later, possibly after the strategy has changed. If memory captures sensitive customer information from a CRM session, you now have a secondary data store that needs the same GDPR controls as your primary database.
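One way to blunt the stale-preference problem is to timestamp every memory and route anything past an age threshold back to a human before it shapes a recommendation. The sketch below assumes a hypothetical 30-day `MAX_AGE` and a `subject_id` field that tags items about a specific customer, which turns an erasure request into a single filter. It is illustrative only: real GDPR erasure would also have to reach embeddings in the vector index, backups, and logs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical policy: older memories must be re-confirmed before use
MAX_AGE = timedelta(days=30)


@dataclass
class MemoryItem:
    key: str
    value: str
    recorded_at: datetime
    subject_id: Optional[str] = None  # customer this item is about, if any


def split_by_age(items, now):
    """Separate memories that may shape recommendations from ones to re-confirm."""
    fresh, stale = [], []
    for item in items:
        if now - item.recorded_at > MAX_AGE:
            stale.append(item)
        else:
            fresh.append(item)
    return fresh, stale


def erase_subject(items, subject_id):
    """Drop every item about one data subject, e.g. after an erasure request."""
    return [item for item in items if item.subject_id != subject_id]


now = datetime(2024, 10, 1, tzinfo=timezone.utc)
items = [
    MemoryItem("discount_stance", "prefers aggressive discounting", now - timedelta(days=45)),
    MemoryItem("brand_voice", "plain and direct", now - timedelta(days=10)),
    MemoryItem("contact_note", "asked for invoices in German", now - timedelta(days=5), "cust-042"),
]
fresh, stale = split_by_age(items, now)
print([item.key for item in stale])  # → ['discount_stance']
items = erase_subject(items, "cust-042")
print(len(items))  # → 2
```

The discounting preference from six weeks ago is not deleted; it is simply held back until someone confirms it still reflects the strategy.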

The teams getting this right are treating agent memory as a first-class data asset. They version it, audit it, and give users explicit controls to inspect and edit what the agent remembers. One CRM team I work with runs a weekly “memory review” where the lead actually reads through what the agent has stored about ongoing accounts. It takes twenty minutes and has caught three meaningful errors in the last quarter.
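Treating memory as a versioned, auditable asset can be as simple as never overwriting a value in place. In this hypothetical sketch (`VersionedMemory`, `inspect`, `correct`, and `forget` are illustrative names, not any framework's API), every write appends a version, a human correction is recorded as a newer version attributed to the reviewer, and the audit log captures who did what without copying the stored values, so a deletion does not leave data behind in the log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class VersionedMemory:
    """Hypothetical store: values are never overwritten, and every change is logged."""
    versions: dict = field(default_factory=dict)   # key -> list of values, oldest first
    audit_log: list = field(default_factory=list)  # (timestamp, actor, action, key)

    def _log(self, actor, action, key):
        # The log records who did what, not the values, so deletions stay complete
        self.audit_log.append((datetime.now(timezone.utc), actor, action, key))

    def write(self, key, value, actor, action="write"):
        """Append a new version instead of replacing the old one."""
        self.versions.setdefault(key, []).append(value)
        self._log(actor, action, key)

    def correct(self, key, value, reviewer):
        """A human edit is just a newer version, attributed to the reviewer."""
        self.write(key, value, actor=reviewer, action="correct")

    def forget(self, key, actor):
        """Remove a key and all its versions; the log keeps only the fact of deletion."""
        self.versions.pop(key, None)
        self._log(actor, "forget", key)

    def inspect(self):
        """What the agent currently believes: the latest version of each key."""
        return {key: values[-1] for key, values in self.versions.items()}

    def history(self, key):
        """All versions of one key, oldest first."""
        return list(self.versions.get(key, []))


mem = VersionedMemory()
mem.write("acme.champion", "Head of Marketing", actor="agent")
mem.correct("acme.champion", "VP Revenue Operations", reviewer="crm_lead")
print(mem.inspect())  # → {'acme.champion': 'VP Revenue Operations'}
print(mem.history("acme.champion"))  # → ['Head of Marketing', 'VP Revenue Operations']
print([(actor, action) for _, actor, action, _ in mem.audit_log])
# → [('agent', 'write'), ('crm_lead', 'correct')]
```

A weekly memory review then amounts to reading `inspect()` for the accounts in play and skimming the audit log for changes the agent made on its own.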

The other uncomfortable shift is around expertise. When an agent reliably remembers six months of campaign decisions, junior team members can access that institutional context without asking senior colleagues. That changes how knowledge flows across the team, and it requires being deliberate about what memory the agent should and should not hold.

What to try if you are evaluating this

Start with a narrow domain where memory continuity has obvious value. Account-based marketing workflows are a good candidate, since the agent can remember stakeholder details, past outreach, and account-specific context across weeks. Customer support triage is another, where pattern recognition across conversations actually compounds.

Avoid deploying persistent memory across an entire team’s general-purpose AI usage on day one. The governance and quality issues compound faster than the value. Pick one workflow, instrument it properly, and watch how the language people use evolves over the first month. If you start hearing “ask the agent” instead of “let me prompt something,” you are seeing the shift happen.

If you are working through this transition and want to compare notes on memory architecture, governance patterns, or what we have seen work across B2B teams in Europe, the conversation is open. The interesting questions in this space are still being figured out in practice, not in whitepapers.